Image Captioning using ResNet-50 and Flickr8k Dataset

This project develops an image captioning model that uses the ResNet-50 architecture and the Flickr8k dataset to generate descriptive captions for images.

Category: Deep Learning

Sub-category: Visual Search

Overview:

This project aims to develop an image captioning model using the ResNet-50 architecture and the Flickr8k dataset. The model combines a convolutional neural network with deep learning techniques to generate descriptive captions for images. ResNet-50, pre-trained on the ImageNet dataset, serves as the backbone for feature extraction, while the Flickr8k dataset provides the image-caption pairs for training and evaluation.

Description:

The project focuses on building a model that generates accurate and meaningful captions for images. The model uses ResNet-50, a 50-layer residual convolutional network pre-trained on the ImageNet dataset. This network serves as a feature extractor, enabling the model to capture high-level visual features from input images.
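The feature-extraction step can be sketched in Keras roughly as follows. This is a minimal sketch, not the project's actual code: the helper names `build_feature_extractor` and `extract_features` are hypothetical, and the choice of global average pooling (one 2048-dimensional vector per image) and a 224x224 input size are assumptions.

```python
import numpy as np
from tensorflow.keras.applications.resnet50 import ResNet50, preprocess_input


def build_feature_extractor(weights="imagenet"):
    """Return ResNet-50 without its ImageNet classification head.

    Assumption: pooling="avg" averages the final feature map so that
    each image is represented by a single 2048-dimensional vector.
    """
    return ResNet50(weights=weights, include_top=False, pooling="avg")


def extract_features(model, images):
    """Compute feature vectors for a batch of images.

    images: array of shape (n, 224, 224, 3), RGB values in [0, 255].
    """
    # Copy to float32 so preprocess_input does not modify the caller's array.
    batch = preprocess_input(np.array(images, dtype="float32"))
    return model.predict(batch, verbose=0)
```

Calling `build_feature_extractor()` with the default argument downloads the ImageNet weights on first use. The resulting vectors would then be fed to whatever caption decoder the project trains; the decoder itself is not described here.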

The Flickr8k dataset is employed for training and evaluation. It consists of approximately 8,000 images, each paired with five human-generated captions. This dataset offers a diverse range of images and associated captions, providing the necessary training material to teach the model how to associate visual content with textual descriptions.
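To make the image-caption pairing concrete, the sketch below groups captions by image. It assumes the commonly distributed caption file layout, where each line reads `<image file>#<caption index>`, a tab, then the caption text; the function name `parse_captions` and the sample lines are hypothetical, and lowercasing is an illustrative cleaning choice rather than the project's documented preprocessing.

```python
from collections import defaultdict


def parse_captions(lines):
    """Group caption strings by image file name.

    Assumed line format: "<image file>#<index>\t<caption text>".
    """
    captions = defaultdict(list)
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines
        image_tag, caption = line.split("\t", 1)
        image_id = image_tag.split("#")[0]  # drop the "#<index>" suffix
        captions[image_id].append(caption.strip().lower())
    return dict(captions)


# Made-up sample lines in the assumed format.
sample = [
    "img1.jpg#0\tA dog runs .",
    "img1.jpg#1\tA brown dog is running .",
    "img2.jpg#0\tTwo children play .",
]
captions_by_image = parse_captions(sample)
```

On the full dataset, each image key would be expected to map to five captions.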

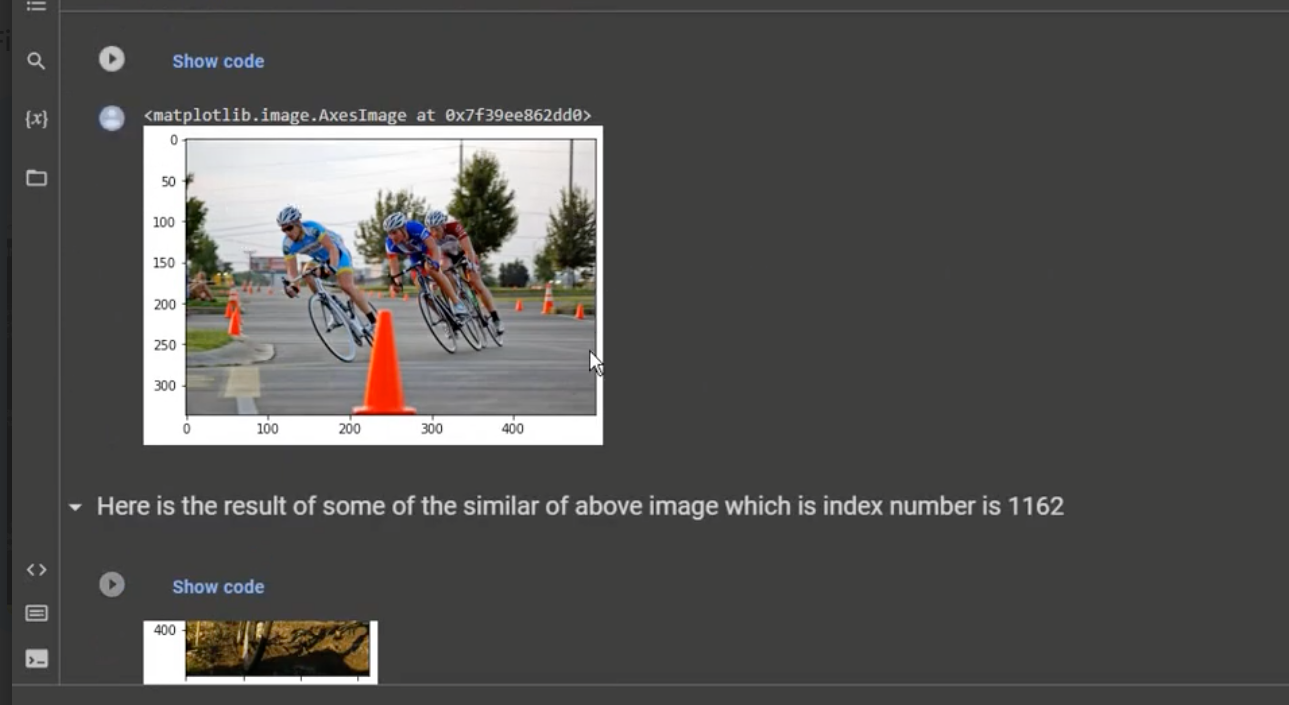

The model implemented in this project has practical applications in visual search. Because it describes visual content in text, it can support efficient and accurate retrieval of information from images and videos across a range of domains.

Dataset: Flickr8k

Pre-trained Model: ResNet-50

Programming Language: Python

Deep Learning Framework: Keras